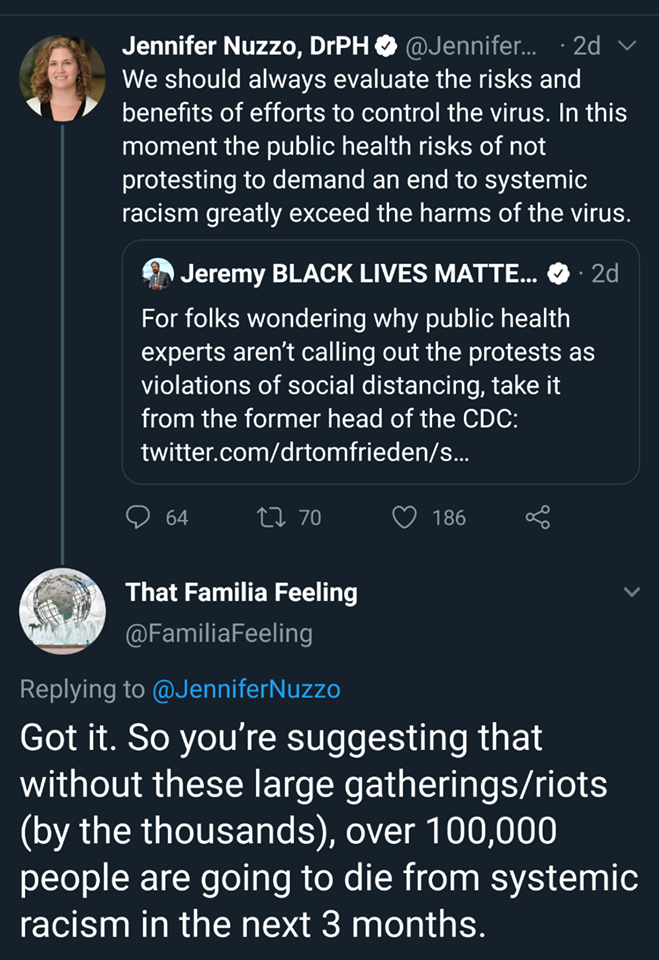

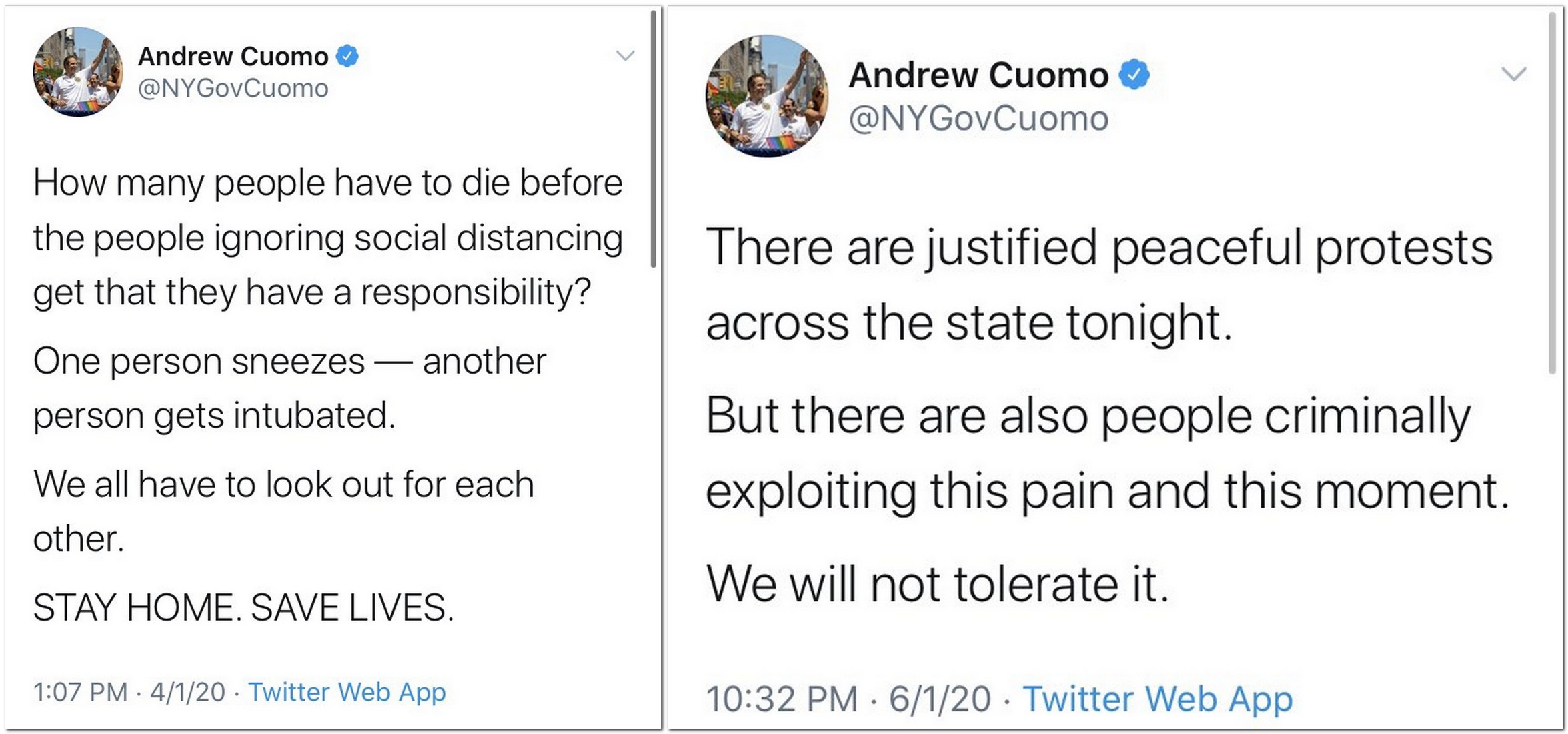

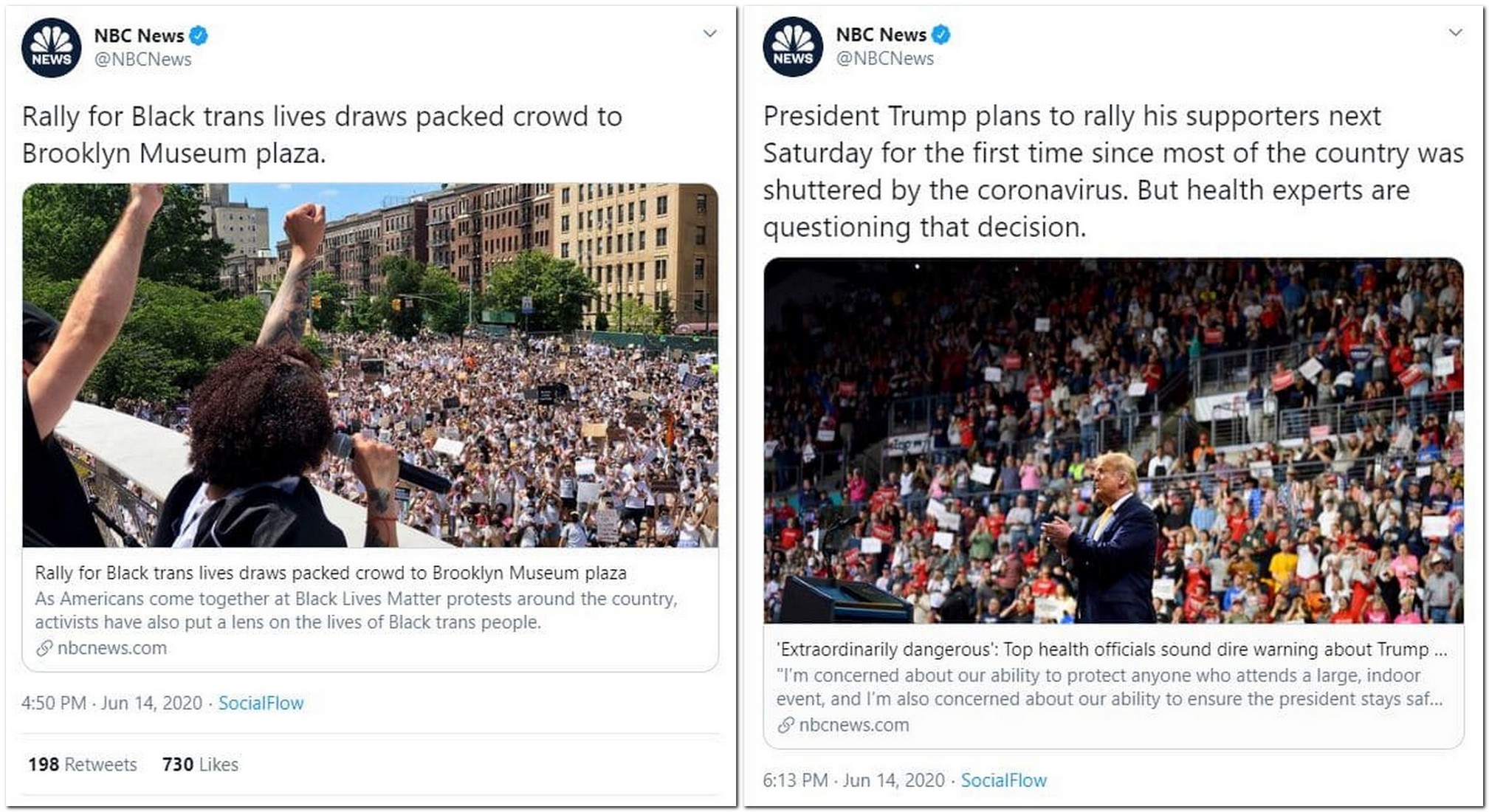

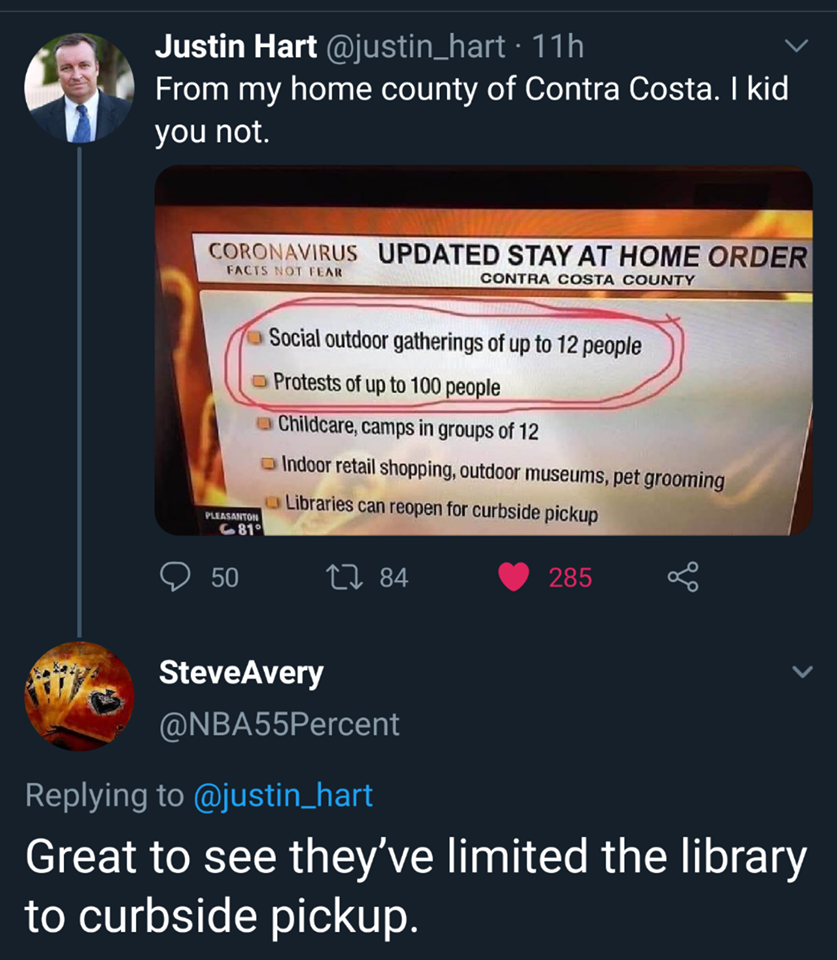

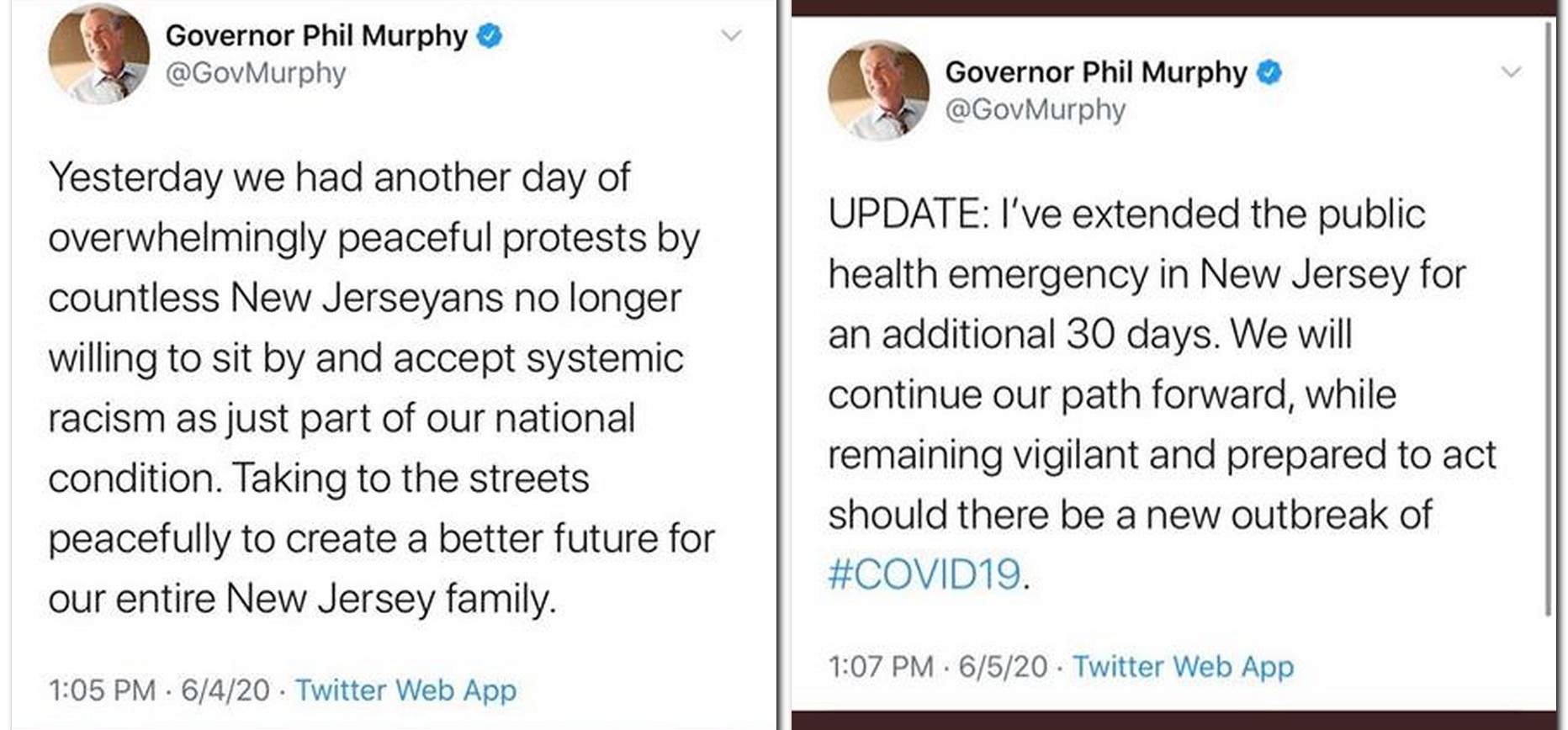

You’ve probably seen something like this meme in your own social media feeds.

I’m gonna do two things to this meme. First: debunk it. Not because it’s all that notable, but because it’s a pretty typical example of something scary and nasty in our society. And that’s what we’re going to get to second: zooming out from this particular specimen to the whole species.

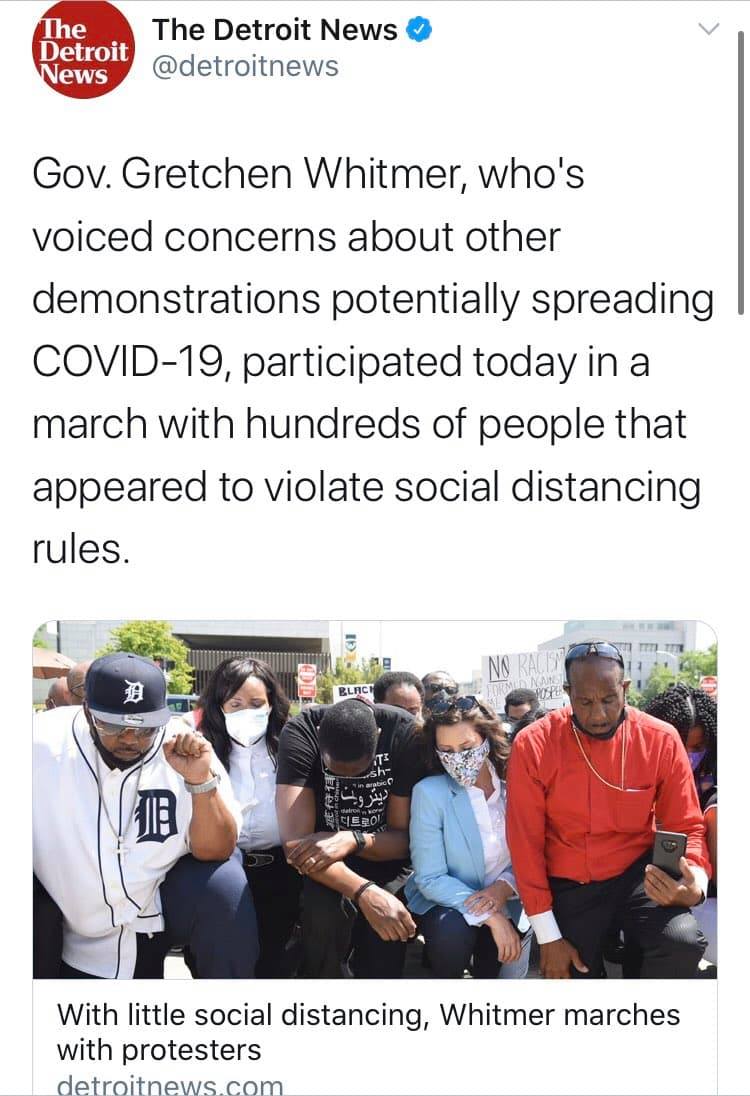

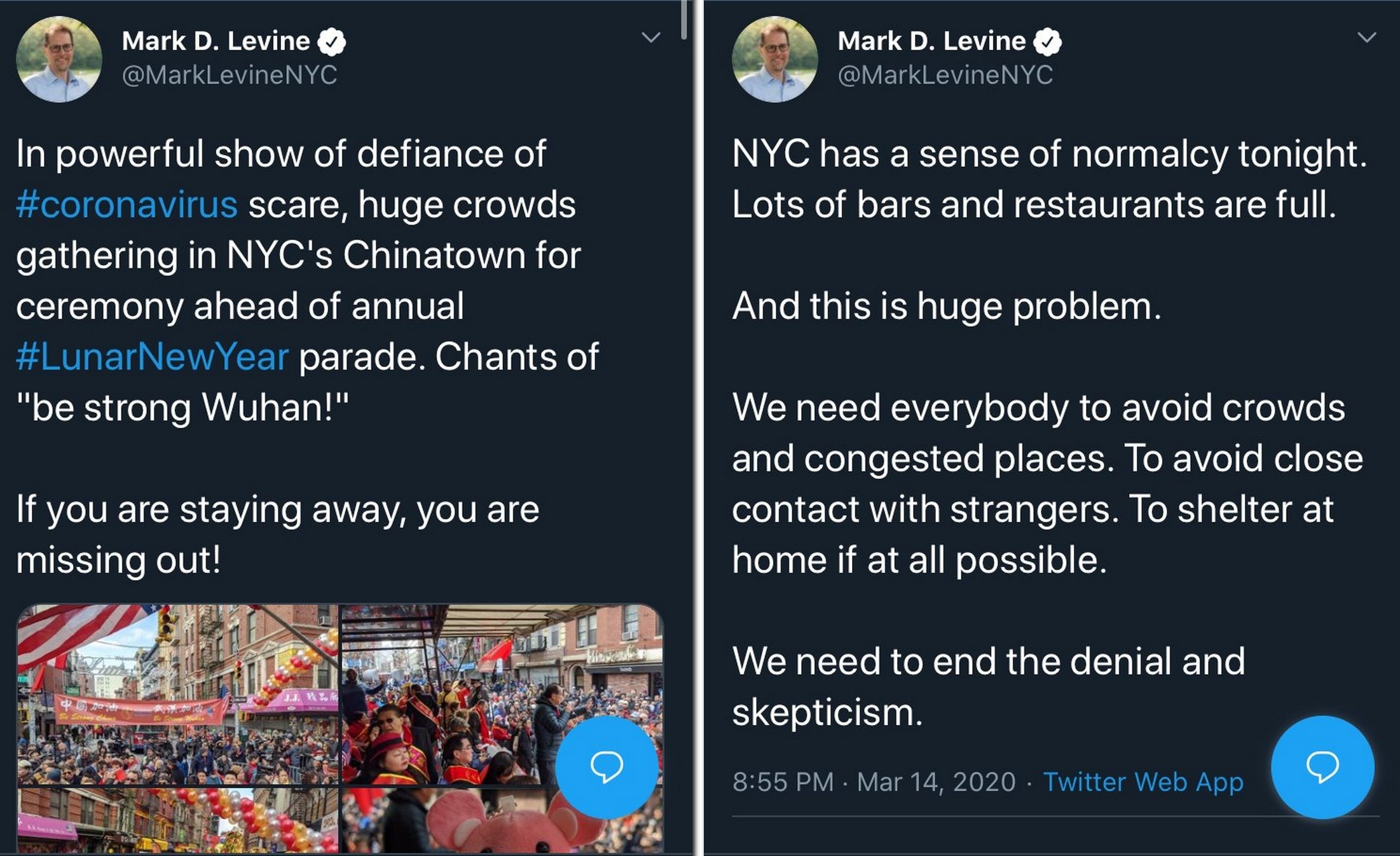

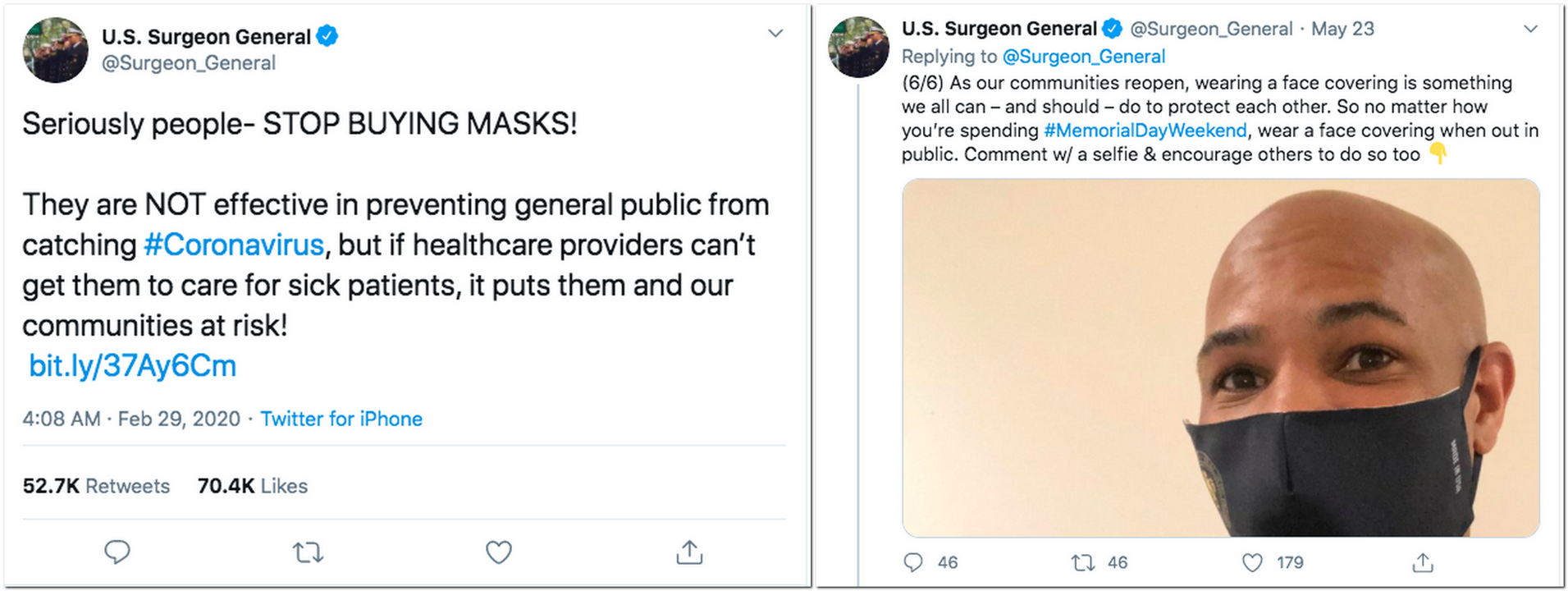

This meme has the appearance of being some kind of insight or realization into American politics in the context of an important current event (the pandemic), but all of that is just a front. There is no analysis and there is no insight. It’s just a pretext to deliver the punchline: conservatives are selfish and bad.

You can think of the pseudo-argument as being like the outer coating on a virus. The sole purpose is to penetrate the cell membrane to deliver a payload. It’s a means to an end, nothing more.

Which means the meme, if you ignore the candy coating, is just a cleverly packaged insult.

You see, conservatives don’t object to pandemic regulations because they would rather watch their neighbors die than shoulder a trivial inconvenience. They object to pandemic regulations (when they do; I think the existence of objections is exaggerated) because Americans in general and conservatives in particular have an anti-authoritarian streak a mile wide. Anti-authoritarianism is part of who we are. It’s not always reasonable or mature, but then again, it’s not a bad reflex to have, all things considered.

One of the really clever things about the packaging around this insult is that it’s kind of self-fulfilling. It accuses conservatives of being stubborn while it also insults them. What happens to people who are already being a little stubborn if you start insulting them? In most cases, they get more stubborn. Which means every time a conservative gets mad about this meme, a liberal spreading it can think, “Yeah, see? I knew I was right.”

Oh, and if incidentally it happens to actually discourage mask use? Oh well. That’s just collateral damage. Because people who spread memes like this care more about winning political battles than epidemiological ones.

Liberals who share this meme are guaranteed to get what they really want: that little frisson of superiority. Because they care. They are willing to sacrifice. They are reasonable. So reasonable that they are happy to titillate their own feeling of superiority even if it has the accidental side effect of, you know, undermining compliance with those rules they care so much about.

I’m being a little cynical here, but only a little. This meme is just one example of countless millions that all have the same basic function: stir controversy. And yes, there are conservative analogs to this liberal meme that do the exact same thing. I don’t see as many of them because I’m quicker to mute fellow conservatives who aggravate me than liberals.

How did we get here?

You can blame the Russians, if you like. The KGB meddled with American politics as much as they could for decades before the fall of the USSR and Putin was around for that. Why would the FSB (contemporary successor) have given up the old hobby? But the KGB wasn’t ever any good at it, and I’m skeptical that the FSB has cracked the code. I’m sure their efforts don’t help, but I also don’t think they’re largely to blame.

We’re doing this to ourselves.

The Internet runs on ads, and that means the currency of the Internet is attention. You are not the customer. You are the commodity. That’s not just true of Facebook and it’s not just a slogan. It’s the underlying reality of the Internet, and it sets the incentives that every content producer has to contend with if they want to survive.

The way to harvest attention is through engagement. Every content producer out there wants to hijack your attention by getting you engaged in what they’re telling you. There are a lot of ways to do this. Clickbait headlines hook your curiosity, attractive models wearing little clothes snag your libido, and so on. But the king of engagement seems to be outrage, and there’s an insidious reason why.

Other attention grabbers work on only a select audience at a time. Other than bisexuals, attractive male models will grab one half of the audience and attractive female models will grab the other half, but you have to pick either / or.

But outrage lets you engage two audiences with one piece of content. That’s what a meme like this one does, and it’s why it’s so successful. It infuriates conservatives while at the same time titillating liberals. (Again: I could just as easily find a conservative meme that does the opposite.)

When you realize that this meme is actually targeting conservatives and liberals, you also realize that the logical deficiency of the argument isn’t a bug. It’s a feature. It’s just another provocation, the way that some memes intentionally misspell words just to squeeze out a few more interactions, a few more clicks, a few more shares. If you react to this meme with an angry rant, you’re still reacting to this meme. That means you’ve already lost, because you’ve given away your attention.

A lot of the most dangerous things in our environment aren’t trying to hurt us. Disease and natural disasters don’t have any intentions. And even the evils we do to each other are often byproducts of misaligned incentives. There just aren’t that many people out there who really like hurting other people. Most of us don’t enjoy that at all. So the conventional image of evil (mustache-twirling supervillains who want to murder and torture) is kind of a distraction. The real damage isn’t going to come from the tiny population of people who want to cause harm. It’s going to come from the much, much, much larger population of people who don’t have any particular desire to do harm, but who aren’t really that concerned with avoiding it, either. These people will wreck the world faster than anyone else because none of them are doing that much damage on their own and because none of them are motivated by malice. That makes it easier for them to rationalize their individual contribution to an environment that, in the aggregate, becomes extremely toxic.

At this point, I’d really, really like everyone reading this to take a break and read Scott Alexander’s short story, “Sort by Controversial”. Go ahead. I’ll wait.

Back? OK, good, let’s wrap this up. The meme above is a scissor (that’s from Alexander’s story, if you thought you could skip reading it). The meme works by presenting liberals with an obviously true statement and conservatives with an obviously false statement. For liberals: You should tolerate minor inconveniences to save your neighbors. For conservatives: You should do whatever the government tells you to do without question.

That’s the actual mechanism behind scissors. It’s why half the people think it’s obviously true and the other half think it’s obviously false. They’re not actually reacting to the same issue. But they are reacting to the same meme. And so they fight, and–since they both know their position is obvious–the disagreement rapidly devolves.

The reality is that most people agree on most issues. You can’t really find a scissor where half the population thinks one thing and half the population thinks the other because there’s too much overlap. But you can present two halves of the population with subtly different messages at the same time such that one half viscerally hates what they hear and the other half passionately loves what they hear, and, as often as not, they won’t talk to each other long enough to realize that they’re not actually fighting over the same proposition.

This is how you destroy a society.

The truth is that it would be better, in a lot of ways, if there were someone out there who was doing this to us. If it was the FSB or China or terrorists or even a scary AI (like a nerdier version of Skynet) there would be some chance they could be opposed and–better still–a common foe to unite against.

But there isn’t. Not really. There’s no conspiracy. There’s no enemy. There’s just perverse incentives and human nature. There’s just us. We’re doing this to ourselves.

That doesn’t necessarily mean we’re doomed, but it does mean there’s no easy or quick solution. I don’t have any brilliant ideas at all other than some basic ones. Start off with: do no harm. Don’t share memes like this. To be on the safe side, maybe just don’t share political memes at all. I’m not saying we should have a law. Just that, individually and of our own free will, we should collectively maybe not.

As a followup: talk to people you disagree with. You don’t have to do it all the time, but look for opportunities to disagree with people in ways that are reasonable and compassionate. When you do get into fights–and you will–try to reach out afterwards and patch up relationships. Try to build and maintain bridges.

Also: Resist the urge to adopt a warfare mentality. War is a common metaphor, and there’s a reason it works, but if you buy into that way of thinking it’s really hard not to get sucked into a cycle of endless mutual radicalization. If you want a Christian way of thinking about it, go with Ephesians 6:12:

For our struggle is not against enemies of blood and flesh, but against the rulers, against the authorities, against the cosmic powers of this present darkness, against the spiritual forces of evil in the heavenly places.

There are enemies, but the people in your social network are not them. Not even when they’re wrong. Those people are your brothers and your sisters. You want to win them over, not win over them.

Lastly: cultivate all your in-person friendships. Especially the random ones. The coworkers you didn’t pick? The family members you didn’t get to vote on? The neighbors who happen to live next door to you? Pay attention to those little relationships. They are important because they’re random. When you build relationships with people who share your interests and perspectives you’re missing out on one of the most fundamental and essential aspects of human nature: you can relate to anyone. Building relationships with people who just happen to be in your life is probably the single most important way we can repair our society, because that’s what society is. It’s not the collection of people you chose that defines our social networks, it’s the extent to which we can form attachments to people we didn’t choose.

What are the politics of your coworkers and family and neighbors? Who cares. Don’t let politics define all your relationships, positive or negative. Find space outside politics, and cherish it.

Times are dark. They may yet get darker, and none of us can change that individually.

But by looking for the good in the people who are randomly in your life, you can hold up a light.

So do it.